Friday, December 14, 2007

Nerd #5

Josh is a third-year student of linguistics and music. He's got a great ear and eye for interesting language data, and is also just a swell guy. Glad to have you with us, Josh!

Sunday, November 18, 2007

Max Headroom in the phonetics lab

First of all, from the NS article, it looks like the Boston University Speech Lab is responsible for this research, but I can't find any reference to the project on their website. The description is of an invasive technique, implanting electrodes directly in poor MH's brain. (His real name seems to be Eric, but one would think they'd have changed it for the purposes of reporting the research anyway, so I'm going to stick with MH.) Sadly, their computer can't recognize 'N-N-New Coke' in the output of this little bundle of neurons, but it can apparently distinguish /u/, /o/, and /i/, and do so with 80% accuracy. Evidently they tell MH to 'think hard about saying /u/' and he complies in his comatose state. Until the details come out, I'll be a bit skeptical about what's really going on.

Nevertheless, what is there on the BU speech lab website is interesting: first, brain imaging data for speech production, most notably the apparent location in the brain of certain articulatory signals. Second, a computer model which they use to situate what they think these groups of neurons are doing. I won't evaluate either one, but if we believe their brain maps, and if we believe they've stuck the electrodes in the right place, then it seems like they are really reading off something like articulatory information.

This is somewhat interesting once you realize that the actual form that articulatory information takes is still up for debate. On the one hand, there's the fairly obvious theory that, when we speak, we just send instructions to the articulators. Of course, if sounds are stored in this articulatory format, then perception must involve something like the Motor Theory of speech perception, which means that you have some (presumably built-in) hardware for matching up speech sounds that you hear with the gestures that produced them. This is how you match up the sounds you hear with stored forms, which just tell you what to do.

On the other hand, a goal-based theory of production (subscription to Journal of Phonetics required) says something like the reverse. The information you need to send to the motor system is (mostly) a bunch of acoustic targets. You might also have articulatory targets, but the key thing is that you can just send 'I want a low f2' to the low-level system and it will work it out automatically, presumably, again, through some built-in mapping. So when we map out what parts of the brain are lighting up when we say /i/ etc., we should consistently see things corresponding to these more abstracted features. If we don't, then we can't tell whether the goal-based theory is right.

Of course, this is not so easy to tell for /i/, since the mapping between acoustics and articulation for the vowel space is fairly trivial, but if we had enough brain data we should in principle be able to tell: do the bits that light up for particular sounds in production seem to correlate with acoustics or articulation? Clearly, the articulatory signals have to be there, but if we can't see the acoustics, we don't have any reason to believe in the goal-based theory. This is factoring out any methodological concerns, of course, which I take it are acute with brain imaging. But it would be interesting to look (and if anyone wants to hunt through the stuff on the BU Speech Lab web site and try and find data bearing on this question, feel free).

Monday, November 12, 2007

Bemoaning the Loss of the English Subjunctive

'“It’s not saying the ‘20-Hour Workweek,’” Mr. Bronson explained. “That would be something that lots of people can live. It’s 40 hours a week versus four. It’s very important in the tech world that consequences are exponential, not geometrical.”'

So what does he mean? Does he mean that in the tech world, consequences are defined as being exponential, not geometric (declarative reading)? Or that the tech world isn't going to care unless the consequences are exponential (subjunctive reading)?

Now, if English still had a subjunctive in regular use, I would know: if he meant this subjunctively he would have said "It’s very important in the tech world that consequences be exponential, not geometrical." Since he didn't, this is declarative. Unfortunately, I think he might actually have intended this to be read subjunctively.

Now, I know what you're thinking: wait a minute, aren't linguists supposed to be all descriptivist and shun prescriptivism? Well, to that I say two things:

1. I come from an intensely prescriptivist background. It's not that easy to throw off, you know!

2. The main reason I'm posting this is to talk about language change. What happens when a change causes confusion? Doesn't it usually ultimately fail? Or, something comes in to take its place?

It seems to me that the loss of the subjunctive is too far along to reverse itself. Once language change gets going in earnest, there doesn't seem to be any stopping it. But if situations like this keep coming up, where I can't figure out from context which of two ambiguous meanings was intended, can we expect to see something come in to take its place? Maybe a slightly awkward injection of a modal, like "It’s very important in the tech world that consequences should be exponential, not geometrical"?

Wednesday, October 17, 2007

Syntax or Lexicon?

So, I've got a question. A rather vague "how do you feel about this approach?" question, that's been a bit of a topic in my syntax class.

I've recently been trying to make my way through "The Normal Course of Events" by Hagit Borer. One of her main themes is that several phenomena traditionally accounted for through lexical specification should actually be accounted for through structural/syntactic means.

For instance, Borer argues that the difference between mass nouns and count nouns, which is traditionally accounted for by stating that each noun in the lexicon is specified as either mass or count[1], is actually structurally represented, so that count nouns have more functional structure than mass nouns.

This is extended to the verbal domain as well. So the difference between unergative and unaccusative verbs, argues Borer, is not a lexical difference, such that "run" is specified as an "unergative" verb, while "arrive" is specified as an "unaccusative" verb, but is actually a structural difference such that unergative verbs have more functional structure than unaccusatives.

And again, the difference between telic and atelic verbs is that telic verbs have more functional structure than atelic verbs.

So what do you think about this approach that consists of taking all of the information out of the lexicon and instead representing it structurally? Like it or don't like it? Good move or bad move? Why or why not?

As for me, going with my gut feeling, I kind of like the idea that everything is structurally represented (so that even a notion like "verb" and "noun" is derived in the syntax). But then I wonder: what does such an approach mean for languages like Yucatec Mayan?

In Yucatec Mayan, there are (at least) two types of intransitive verbs: inherently telic, and inherently atelic intransitives. The inherently telic verbs, when unmarked, are interpreted as (hence the name) telic. They need to be overtly marked by an atelic morpheme to be interpreted as atelic. The inherently atelic verbs, on the other hand, when unmarked, are interpreted as atelic, and they require an overt telic morpheme to be interpreted as telic.

So if Borer is arguing that atelicity is universally represented by less functional structure, what does it mean to have these inherently telic verbs, where an overt morpheme marks atelicity, and telicity is unmarked?

Now, I have yet to fully read Borer (and have the suspicion that her theory actually may be able to account for this [2]) but at first glance this seems problematic, IF I assume that overt morphological marking corresponds to more functional structure.

So yes, two questions: What do you think of having all the information represented structurally, as opposed to being listed in the lexicon, and is the assumption that overt morphological marking corresponds to more functional structure valid?

--------------------------------------------------------

[1] On a random note...why is "e-mail" a count noun, but "mail" a mass noun?

[2] My paper topic for this syntax class! It's getting somewhat ridiculous: I don't know how to get around using the following phrase "inherently atelic (i.e. possible unergative) intransitives," but really, there ought to be a limit to the number of morphological negations you can use in a single noun phrase...

Friday, October 5, 2007

The Ig Nobel Prize in Linguistics

Source: http://news.bbc.co.uk/2/hi/science/nature/7026150.stm

Presumably this means they could tell the difference between Dutch and Japanese spoken forward...

We've got to get going on this blog again!

Tuesday, June 19, 2007

Another thing...

So now what I'm asking: Does anyone know someplace else where someone might expect an existential quantifier, in a language like English?

And just because I like to complain, UBC says that this article on (supposed) existential polarity items in Chinese is (supposedly) available full-text online, but the referred site keeps asking me for $32.00! Now I might have to do something ridiculous, like actually, physically, go into the library and find the journal...

Monday, June 11, 2007

Trying to piece together an analysis

Now, this research is what I've been focusing on for the past two years, and it's very dear to me, but I've been reluctant to post on this stuff before. This is because the relevant data really belongs to my language consultant, not me, and I don't think it's right to just splash it all over the internet. But I think I've managed to write it in a way that avoids this problem since I don't actually provide any data.

So here is my full-of-holes-and-faulty-logic working analysis:

Assumption 1: In IE languages like English, semantic truth-values are encoded through Tense, or IP, in the clausal (verbal) domain. (Kearns 2000)

Messy Assumption 2: The semantic equivalent of truth-values in the nominal domain is existence, or referentiality. (Because I am, er, semantically-challenged, I'm not sure what the difference between existence and referentiality is. )

Now, here is a hole. Is this assumption justifiable? I figure that nominal elements don't really have truth-values, but something more like an existential, or referential value. Or looking at it the other way, a noun has (or doesn't have) a referential value, and the clausal/verbal is considered 'true' if the event being referred to exists, and is 'false' if the event doesn't exist. Does that make sense? Man, I really wish there were more undergraduate classes in semantics. But anyways, assuming that the above, er, assumption [i], is justified, I'll move on with the holey analysis...

Generalization 1: NPIs are known for having existential narrow-scope - they are non-referential. (Progovac 1994, Uribe-Etxebarria 1996)

Assumption 3: Blackfoot lacks the syntactic node Tense, utterances instead being anchored deictically via a Participant (as in Speech Act Participant) node (Ritter & Wiltschko 2005).

Now, here's where my (poor excuse of an) analysis gets really vague and holey. I have a load of questions. If Blackfoot doesn't have the syntactic node Tense, how are semantic truth-values encoded? Or are they even encoded? Because there's this idea that several languages do not ASSERT information, but instead PRESENT information (or so I gathered from my LING 447 evidentials seminar...but have yet to find a citable reference). Does the Participant node encode truth-values, or is some other semantic, perhaps speech act participant-related, property being encoded?

BUT anyways, if I jump over that giant hole in my analysis, and assume that there is some kind of Participant-related semantic feature encoded by the Participant node in the clausal/verbal domain, the simplest stab at what the nominal equivalent would be is just Participant. Right? Because while it doesn't really make sense for nouns to have a +/- truth-value, they can certainly have a +/- SpeechActParticipant value.

Now putting this all together, one might predict that Blackfoot NPIs wouldn't have an existential property within the scope of negation, but instead have a parallel SpeechActParticipant property within the scope of negation. (Which, of course, I already know is the case, and I'm just pretending is a prediction for presentation's sake...)

So, despite the giant holes, does this analysis make sense? Is it interesting? I hope it's interesting...

And a question from this: How might such a semantic 'SpeechActParticipant' property manifest in other parts of the language? What kind of predictions does this analysis make? How cool would it be if this language didn't quantify over worlds, but instead over deictic spheres? Or something like that...

----------------------------------------

[i] I know Giannakidou's 1998 notion of semantic (non)veridicality (which is directly related to truth-values) translates (non)veridicality onto the nominal domain (with determiners and quantifiers) in terms of whether the denotation of the NP is nonempty or not...which kind of feels like the same sort of thing I'm trying to say. Except, of course, the semantic terminology is a giant barrier in my understanding. See now, I really could benefit from a 'Semantics Awareness Week'...

(Hey, they have those wristbands for just about anything. Why not this?)

Wednesday, June 6, 2007

Linguistic Ecumenism

Today I'm going to talk about linguistic ecumenism.

In my two years at the fringes of the linguistics academic community, one thing I notice over and over again is that different fields of linguistics don't talk to each other. I believe the reasons for this include the following:

1. Time. Who has time to read a lot of research in her own field, much less the less-relevant fields?

2. Disdain. It is easy to get dogmatic about something you know a fair bit, but not a huge amount, about. My first exposure to linguistics was Chomskian*, and the arguments for it are quite convincing. The arguments against it are not given. There are plenty of structural linguists, who, with good reason, think sociolinguistics is premature: how can we study language variation when we don't even know what language is? And there are plenty of sociolinguists who, also with good reason, think structural linguistics is oversimplifying to the point of failing to capture anything about language. Etc.

3. Ignorance. Linguists who know nothing of a given subfield of their discipline are likely to fail to see the value of it, or even fail to notice its existence.

Another thing I've noticed is that there are many students, especially at the undergrad level, who are still interested in subfields of linguistics that are not their specialty. I've spoken to many young linguists on this very topic, and while some are somewhat agreeable for the sake of being friendly or polite, and throw in half-joking comments like "well, sure, except for [insert derided subfield/theory here]", many are in full agreement. Enough that I say we start an explicit movement.

THE ECUMENICAL LINGUISTICS MANIFESTO

A spectre is haunting linguistics -- the spectre of Ecumenism. All the powers of old linguistics have entered into a holy alliance to exorcise this spectre: prof and chair, Chomsky and Labov, MIT dogmatics and UCLA postmodernists.

Where is the theory in opposition that has not been decried as foolish by its opponents at MIT?

Two things result from this fact:

I. Ecumenism is already acknowledged by all traditional academic powers to be itself a power.

II. It is high time that Ecumenical Linguists should openly, in the face of the whole linguistic world, publish their views, their aims, their tendencies, and meet this nursery tale of the spectre of ecumenism with a manifesto of the movement itself.

To this end, imaginary Ecumenical Linguists of various institutions have assembled in my head and sketched the following manifesto, to be published in English, IPA and possibly French if we feel like it.

We have laboured under the pretense that separate subfields of linguistics are in conflict with one another. No more! We must recognise that linguistics is a science, and therefore (1) is virtually always wrong, and (2) must by definition be constantly open to refutation, alternative theories and new data. A good scientist is excited by problematic data and opposing viewpoints. A good scientist struggles against her ego in the service of the search for knowledge. Brothers and sisters, the ego must not win!

We, the new generation of linguists, must rise up against this dogmatic separation! We must fight for the time to keep up on research from outside our tiny specialties! We must fight down the egoistic need to be right, and embrace opposition, debate, and cooperation!

ECUMENICAL LINGUISTS OF THE WORLD, UNITE!

* A math prof once told me that the greatest compliment a mathematician can get is for his name to be used as an ordinary noun: uncapitalised and subject to morphology. Chomsky's halfway there.

Saturday, May 19, 2007

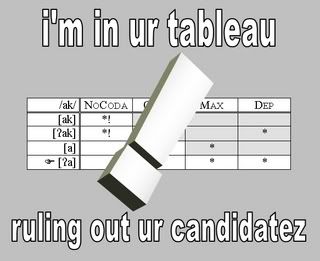

On Things That Are Awesomer than Cat Macros:

(Via Phonoloblog.)

The best thing ever? Perhaps.

I beat up Michael, but *I beat up him

A data set I came up with during yesterday's car trip:

(1)

a. I beat him/them/you up (twice a week).

b. *I beat up him/them/you (twice a week).

c. We beat each other up (twice a week).

d. *We beat up each other (twice a week).

e. We beat ourselves up (twice a week).

f. *We beat up ourselves (twice a week).

g. Jon and Michael beat each other up (twice a week).

h. *Jon and Michael beat up each other (twice a week).

(2)

a. I beat Michael up (twice a week).

b. I beat up Michael (twice a week).

(3)

a. I beat the/a boy up (twice a week).

b. I beat up the/a boy (twice a week).

(4)

a. I beat all the boys up (twice a week).

b. I beat up all the boys (twice a week).

c. I beat all boys up.

d. I beat up all boys.

e. I beat boys up (twice a week).

f. I beat up boys (twice a week).

(5)

a. I beat each boy up (twice a week).

b. I beat up each boy (twice a week).

(6)

a. I beat his brother up (twice a week).

b. I beat up his brother (twice a week).

(7)

a. I beat someone up (twice a week).

b. ?I beat up someone (twice a week).

(8)

a. ??I beat no one up.

b. ??I beat up no one.

c. I didn't beat up anyone.

d. I didn't beat anyone up.

So what's the generalization? At first I thought that the elements that cannot appear outside of 'beat up' were the elements that require some kind of antecedent, or discourse context, in order to be meaningful, but doesn't the data in (6) also require a previously established reference for 'his'? But then again, maybe that doesn't matter because 'his' is not an argument of the verb...
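For what it's worth, the two versions of that hypothesis can be checked against the particle-first rows above with a tiny script. This is only a rough sketch: the two true/false flags on each row are my own hand-coding (an assumption, not part of the judgments), and the `Item` and `mispredicted` names are made up for illustration. The (8a/b) rows are left out because they are marginal in both orders.

```python
from typing import NamedTuple

class Item(NamedTuple):
    example: str            # label of the "beat up X" row in the data set
    obj: str                # the object that follows the particle
    judgment: str           # "" = fine, "?" = marginal, "*" = bad
    contains_anaphor: bool  # ASSUMED coding: some word in the object needs an antecedent
    head_is_anaphor: bool   # ASSUMED coding: the argument of the verb itself needs one

# Judgments copied from the data set; the two boolean columns are hand-coded guesses.
ITEMS = [
    Item("1b", "him/them/you", "*", True, True),
    Item("1d", "each other", "*", True, True),
    Item("1f", "ourselves", "*", True, True),
    Item("2b", "Michael", "", False, False),
    Item("4f", "boys", "", False, False),
    Item("5b", "each boy", "", False, False),
    Item("6b", "his brother", "", True, False),
    Item("7b", "someone", "?", False, False),
]

def mispredicted(flag: str) -> list[str]:
    """Rows where 'flag is true' fails to line up with 'the row is starred'."""
    return [it.example for it in ITEMS if getattr(it, flag) != (it.judgment == "*")]

print(mispredicted("contains_anaphor"))  # ['6b']
print(mispredicted("head_is_anaphor"))   # []
```

Under this coding, the "anything in the object needs an antecedent" version trips on (6b), while the "the argument itself needs an antecedent" version fits every row listed. That only shows the second version is consistent with this small data set, not that it is the right generalization.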

Someone has probably already figured this out. Perhaps I will google it...

Tuesday, May 15, 2007

Lovely diagram...

I, er, just wanted to share the beautiful diagram I made[1], which I will include in the PPT presentation for my honours thesis defence tomorrow.

------------------

[1] Meaghan Fowlie gave me the idea that there was a hierarchy involved with these semantic properties, hence the sweet vertical formatting.

Wednesday, April 25, 2007

Prescriptivist Tenses...

I use this 'Stumble' extension for Firefox, which randomly sends you to webpages that others have tagged as interesting. Today it sent me to this page. Let me warn you - it's a prescriptivist rant. [insert your own spiel about how prescriptivism is wrong]. What I found fascinating is the fact that people rant about these things without researching them first. If I were to rant about something, I would research it first, you know, to cover my bases. So here's an excerpt:

#27: UNEXPLAINED CONDITIONALS: I'm baffled by the now almost universal use in the U.S. of the conditional tense where no condition appears to exist to justify it. For example, it is now apparently standard practice to thank people conditionally, as in "I would like to thank Joe Smith for all his help." The use of "would" suggests an unspoken condition, as in "I would like to thank Joe Smith for all his help but I'm not going to" and prompts the response, "What's stopping you?" "I want to thank ....." is no better. Just say "Thank you, Joe Smith, for all your help" and slip him the plain envelope with the cash. November 23, 2005.

Now, I know that people usually talk about a 'conditional mood' as opposed to a 'conditional tense', and I know that I hear the counterfactual conditional being used to convey politeness all of the time. But what I don't know (shamefully), is what 'mood' is. I know Tense, according to Reichenbach, is the relationship between the utterance time UT and the reference time RT. But in order to provide a definition for mood I'll need to do some googling...

(cue wavy lines and 'passing of time' music)

Definition 1:

"Mood

SYNTAX: cover term for one of the four inflectional categories of verbs (mood, tense, aspect, and modality). The most common categories are associated with the way sentences are used: indicative (statement), imperative (command), optative (wish), etc. Sometimes the distinction between declaratives (I go) and interrogatives (Do I go?) is considered one of mood. "

Source: http://www2.let.uu.nl/UiL-OTS/Lexicon/

Definition 2:

"Mood is one of a set of distinctive forms that are used to signal modality" where "modality is a facet of illocutionary force, signaled by grammatical devices (that is, moods), that expresses" either "the illocutionary point or general intent of a speaker, or a speaker’s degree of commitment to the expressed proposition's believability, obligatoriness, desirability, or reality. "

Source: SIL

Definition 3:

"unlike modals, mood markers do not have quantificational force of their own; their main function is to add a presupposition about the type of conversational background that is involved in the modal interpretation of the sentence."

Source: Matthewson et al. 2005:12, on Portner 1997

So, er, while I still don't know exactly what 'mood' is, I think the common theme in the above definitions is that mood is what results when something is morphologically or syntactically used to encode elements of the F-domain, where F consists of the illocutionary force and illocutionary context (where Speech Act = illocutionary force + illocutionary context + propositional content). Obviously that needs to be ironed out a bit, because I think the vagueness of that definition allows the encoding of information structure like Topic and Focus to also be considered as mood markers...which I'm not sure is what I want. If anyone wants to tell me what mood actually is, I'd be grateful!

Saturday, April 21, 2007

Post-nominal 'of'-phrases

A question for L1 English speakers: Do you agree with the judgments given below?

(113) *The imposition of the government of a fine

(114) The government's imposition of a fine

These are stolen from David Adger's 'Core Syntax', by the way. The generalization is that Agents cannot be realized as post-nominal 'of'-phrases, but for me, in my[1] brand of English, (113) is grammatically well-formed. It's just stylistically disgusting. Is this just me, or do other people get this too?

[1] evidently nonstandard

Friday, April 13, 2007

A (lexical) semantic or syntactic definition?

Buahahah, another reason to avoid preparing for my anthropology exam (and I feared I had exhausted them all...)

If we're talking about evidentials, I feel like I have to point out that evidentials are not restricted to assertions - some languages (although this is typologically rare) allow evidentials to tack onto questions and imperatives, and for this reason I prefer to use Faller 2002's broad definition of an evidential. She defines evidentials as elements that "encode the grounds for making a speech act," which, for speech acts of the type 'assertion', are typically one's information source (Faller 2002:81). Just to frame things, Faller defines epistemic modality as referring to a judgment of possibility or necessity regarding the truth of a proposition (Faller 2002:81). The main difference she makes between evidentials and epistemic modals, as I read it, is whether an element encodes, as opposed to implicates, the relevant semantic material. (Whether or not this can be conflated with Matthewson et al. 2005's notion of 'fixed' versus 'context-varying' values is a headache I feel I should have figured out but still haven't). Basically, an evidential encodes one's grounds for making a speech act, and may or may not implicate judgments/evaluations regarding the validity of the speech act. An epistemic modal encodes a judgment/evaluation, and may or may not implicate one's grounds for making the speech act. And, as Aislin mentioned, language can be sticky in that an element might encode both. So, if Aislin's in De Haan's camp, you can say that I'm camping out with Faller. I like her definitions.

Now, my issue is not so much how these semantic definitions hold up to other semantic definitions, but how these semantic definitions correspond with the syntactic tests used to categorize an element as either an evidential or an epistemic modal. Tests for evidentials often involve trying to embed them under some sort of propositional operator (such as my favourite propositional operator, negation), where dedicated evidentials are supposed to scope outside of the propositional content, and epistemic modals are ...well...tricky. Some of them can scope under negation (eg. English 'can') but some of them scope outside of negation (eg. English 'must')...but generally, if something scopes under a propositional operator, it's probably a modal (sufficient, but not necessary). At least this is the impression I get. My issue with this is that it seems to assume i) that a notion like syntactic c-command at LF correlates with semantic scope, and ii) that an element is either under the scope of a propositional operator, or outside of the scope of a propositional operator.

ii) is the one that makes me think the most. As noted above, things can encode more than one kind of semantic material (like English tense/aspect being conflated in the Perfect, etc.). So say you have a morpheme /MORPHEME/ that encodes both a semantic feature A and also another semantic feature B. What stops A from escaping the scope of a propositional operator while B stays within the aforementioned propositional operator's scope? Am I just a crazy person, or does this make sense to other people too?

On a side note...I'd also like to welcome Meaghan Fowlie to the blog!

And another side note: I am a dork, so when I saw this footnote:

**ie, by me, in my arrogant undergrad seminar papers. But by actual, non-proto linguists, as well

It made me think that Aislin was reconstructed from comparative research on extant linguists, and should really be denoted as *Aislin. (Man, linguists get a lot of mileage out of that asterisk...remember phrase structure rules where a star AFTERWARDS means recursive???)

And since three is a good number, another side note: I totally want a linguistics t-shirt, but am torn between the several options of what to put on the t-shirt...whether to do an "I *heart* Saussure", or something that could be taken entirely the wrong way by non-linguists like "I raise my diphthongs before voiceless obstruents"...

Fun with derivational morphology!

"It's a word we in the media like to trot out today: paraskevidekatriaphobia [pronounced pair-uh-skee-vee-dek-uh-tree-uh-FOH-bee-uh] -- the excessive, and sometimes morbid fear of Friday the 13th.

"We like it, first, because it's such an impressive-sounding word -- it takes some doing to make all those syllables trip elegantly off the tongue".

Interesting tidbit: "The word 'paraskevidekatriaphobia' was devised by Dr. Donald Dossey who told his patients that 'when you learn to pronounce it, you're cured!'"

A welcome and a few words on evidentiality

Meagan (not to be confused with Meaghan, above,) has recently been complaining that no one else has been posting, and that she feels lonely and awkward. Like, one might imagine, a girl who went to a junior high school dance with a bunch of friends, and is now sitting miserably on a bench beside the DJ's table because everyone's ditched her to go kiss boys on the soccer field, and she hates this song, and she didn't want to come, anyway. Not that that's ever happened or anything.

So I thought I'd drop a few words about evidentiality, a remarkably contentious subject that we've been studying in a seminar this past semester, and then we can argue about it. The definition of evidentiality is somewhat contested, but most people agree that it's concerned with linguistically encoding information source. For example, in her 2004 monograph on the subject, Aikhenvald divided evidentiality into visual (ie, evidence from sight) and non-visual (from another sense) direct evidence and inferred, assumed and reported indirect evidence. Now, Aikhenvald's typology has been criticized** for including only dedicated evidential morphemes*** and, apparently, basing her semantic typology on the system of Tariana, a language she's studied extensively. But, y'know, typology is hard work, especially when dealing with a somewhat ill-defined semantic notion, so props to Aikhenvald.

But the really controversial issue is what relationship evidentiality has to epistemic modality, which, essentially, conveys the speaker's degree of certainty in the proposition presented.**** The controversy is understandable: these two notions are often closely linked, as in Western Germanic languages or St'at'imcets. Furthermore, some have asserted that modal judgements are implicit in evidence type, as some types of evidence (generally direct) are more valuable than others.***** But there are counterexamples: for example, in Kashaya Pomo, all evidentially marked statements are taken as certain, and in Plains Cree direct quotes (which are certainly a type of reportative) are considered very valuable evidence.

Now, a bold statement to kickstart debate: I'm with de Haan -- "Epistemic modality evaluates evidence and on the basis of this evaluation assigns a confidence measure to the speaker's utterance. ... An evidential asserts that there is evidence for the speaker's utterance but refuses to interpret the evidence in any way." (1999; italics present in the original.) I will, however, concede that a given morpheme can have both modal and evidential force. What do you think?

___

*Who's in favour of tee shirts? "TEAM LANGUAGE NERDS": I think the world is ready.

**ie, by me, in my arrogant undergrad seminar papers. But by actual, non-proto linguists, as well.

***Although, apparently, some people think that other strategies for conveying evidential force don't count or something. Oh, what a tangled web...

****See: SIL's somewhat facile treatment, Matthewson's article on St'at'imcets modality and de Haan's evidentiality homepage, if you're interested in reading some divergent viewpoints.

*****Check out Palmer and Frajzyngier's****** 1987 Truth and the compositionality principle: A reply to Palmer and 1985 Truth and the indicative sentence, both in Studies in Language, for examples of these kinds of arguments.

******Holy goodness, but that dude publishes a lot.

Saturday, March 31, 2007

How can this be possible?

So I was doing a little research on residential schools, and here's an article:

Here's the part that was new to me: "The last residential school closed in 1996."

1996? I was already shocked when I was under the impression that the last residential school closed in 1984, but 1996? Is this possible? How is it possible? How can something so blatantly racist still have been operating in Canada as recently as 11 years ago? I was alive then! I was already 11! You can't even try and (weakly) rationalize it with "Oh, back then no one realized it was wrong..."

So I went hunting through Wikipedia to see which school it was, but there're just a lot of schools listed as "closing date unknown."

Yeah, so I know this doesn't directly relate to language or linguistics, but as someone who thinks that First Nations languages should really be studied if people really want to know how different languages can be (and, correspondingly, what this UG thing really looks like), it is indirectly related.

Wednesday, March 28, 2007

Not getting what 'got' means...

(1) I got kicked.

(2) I was kicked.

(3) I just got kicked.

(4) ?I just was kicked.

(5) I was just kicked.

(6) *I got just kicked.

Tuesday, March 27, 2007

On a slightly-less-intense note...

(1) (*)It's even made by American Apparel, who believes in fair labor practices.

Now -- leaving aside the thorny issue of whether or not American Apparel is an ethical company -- to me, this is an ill-formed sentence. (Hence, the asterisk.) I much prefer one of these:

(2) It's even made by American Apparel, who believe in fair labor practices.

(3) It's even made by American Apparel, which believes in fair labor practices.

I think this is because I require "who" to refer to people. One can treat a corporation, such as American Apparel, as a group of people, in which case "who" is appropriate but, crucially, plural. (viz. sentence 2.) One can also talk about a corporation as an inanimate entity, in which case I don't permit "who" to be used.

What do you think? Native English speakers, is sentence 1 grammatical for you?

Monday, March 26, 2007

First post! Yucatec Mayan Agreement

So I thought I'd start this thing off with some linguistic research that I've been (was?) doing...

Last semester I was doing a group research project for my morphology class - the topic was cross-linguistic agreement, where the basic idea was to look at a whole bunch of languages and try to categorize the types of agreement we found according to the typology outlined in Elouazizi & Wiltschko 2006.

Here's a pdf of the poster that we presented as our final project. It deals with agreement in Yucatec Mayan (a super-cool language, btw), which at first glance seems problematic for the typology but is actually predicted by the theoretical framework. I thought it was pretty cool...

(Note: when we were working on the poster, none of us were really good with the distinction between lexical aspect and grammatical aspect, so, er, please forgive those mistakes... I know better now *hangs head in shame*)

And here's an introduction to the theoretical framework, because the poster kind of assumed familiarity with it, and thus lacks an intro.

START Introduction

1.0 Theoretical Framework and Assumptions

Elouazizi and Wiltschko 2006:

Under the assumption that agreement is pronominal (Ritter 1995), each type of agreement corresponds to a different level of representation: D-agreement maps onto Comp, φ-agreement onto Aux/Infl, n-agreement onto little v, and N-agreement onto V.

Each type of agreement has different distribution, different targets, different sensitivities, and different binding-theoretic properties, falling out from the category of agreement and the corresponding level of representation.
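For anyone who'd rather see that mapping as a lookup table, here's a minimal sketch in Python. Only the four pairings come from the summary above; the names `AGREEMENT_TO_HEAD` and `host_of` are made up for illustration and aren't anything from Elouazizi & Wiltschko.

```python
# Illustrative only: the four pairings are taken from the summary above;
# the names AGREEMENT_TO_HEAD and host_of are hypothetical.
AGREEMENT_TO_HEAD = {
    "D-agreement": "Comp",
    "φ-agreement": "Aux/Infl",
    "n-agreement": "little v",
    "N-agreement": "V",
}


def host_of(agreement_type: str) -> str:
    """Return the syntactic head that a given agreement type maps onto."""
    try:
        return AGREEMENT_TO_HEAD[agreement_type]
    except KeyError:
        # Anything outside the four listed types is not covered by this sketch.
        raise ValueError(f"unknown agreement type: {agreement_type!r}") from None


if __name__ == "__main__":
    for agr, head in AGREEMENT_TO_HEAD.items():
        print(f"{agr} -> {head}")
```

A table like this only records where each type sits; the differences in distribution, targets, sensitivities and binding properties would have to be stated separately for each row.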

So, yeah, I think now that what we called, very vaguely, X-agreement on Aspect, might be Classifier-agreement on Aspect, since classifiers seem parallel to aspect in that they can be seen as distinguishing between mass/count nouns the same way that aspect distinguishes between imperfective/perfective. If it were Classifier-agreement on Aspect, that might predict that this type of agreement could only show up in languages that have a Classifier Phrase (Yucatec Mayan does have classifiers)... but I haven't been able to find the time to look into it more closely. And we couldn't figure out a way to account for Georgian agreement either. Too bad! It's way more interesting than the anthropology paper I am procrastinating from working on by posting this.

Comments? Questions? Scathing remarks?

References

Déchaine, Rose-Marie and Martina Wiltschko. 2002. “Decomposing Pronouns”. Linguistic Inquiry 33(3): 409-442.

Elouazizi, Nouredine & Martina Wiltschko. 2006. “The Categorical Status of Subject Verb Agreement”. Presented at the UBC Department of Linguistics Research Seminar.

McGinnis, Martha. 2001. “Semantic and Morphological restrictions in Experiencer Predicates”. In Proceedings of the 2000 CLA Annual Conference, ed. John T. Jensen & Gerard van Herk, 245-256. Cahiers Linguistiques d'Ottawa. Department of Linguistics,

McGinnis, Martha. 2005. “Phi-feature Competition in Morphology and Syntax”. Submitted to the Proceedings of the McGill Workshop on Phi-Theory, ed. Daniel Harbour, David Adger, and Susana Béjar; Ms., University of Calgary.

Ritter, Elizabeth. 1995. “On the syntactic category of pronouns and agreement”. Natural Language & Linguistic Theory 13: 405-443.

Tonhauser, Judith. 2003. “F-constructions in Yucatec Maya”. In The Proceedings of SULA 2, ed. Jan Anderssen, Paula Menéndez-Benito & Adam Werle.

Travis, L. 1991. “Inner aspect and the structure of VP”. Paper presented at NELS 22.

Wunderlich, Dieter, and Martin Krämer. 1999. “Transitivity alternations in Yucatec, and the correlation between aspect and argument roles”. Linguistics 37: 431-479.